This guest post was written by Diahala Doucouré, a content strategist who helps female and sexual health brands reach their target audiences. Her approach focuses on blending empathy and resourceful tactics to create content that resonates with the intended audience. You can find out more about her work and analysis pieces here.

ChatGPT. It has been everywhere.

It took the internet by storm just before Christmas 2022, and for good reason: the applications seem endless.

ChatGPT, the latest product released by the American tech company OpenAI, is a chatbot you interact with. Send it a request for an explanation, and it will generate an answer for you. Want a more elaborate answer or a specific angle? Just ask, and it will build on its previous answers to provide a more sophisticated response. As you converse with it, ChatGPT builds a thread, much like a real conversation. It tries to understand what you’re most interested in and delivers the desired output within seconds. At the time of writing, it is still free to use (1).

What’s more, the generated answers read as if a human had written them. Many people, myself included, were impressed by how good the answers seemed. But only in appearance.

For companies, it is tempting to use ChatGPT to produce all their written content, or at least to get a head start on any text-based marketing material: landing pages, FAQs, social media posts.

However, FemTech and SexTech deal with sensitive health questions and information. Can we, and should we, rely on ChatGPT while it is still so new? Could there be pitfalls that are all the more dangerous for young sectors like FemTech and SexTech?

Consumers are increasingly attentive to brand personality, a consistent tone of voice and overall communication, which makes thoughtful brand messaging key. As an industry concerned with the gender data gap (i.e. the fact that we know less about female bodies because research has mostly taken male bodies as the standard for scientific studies), we need to be responsible and aware of the biases ChatGPT comes with. And as consumers become more “health literate”, the sector is under growing scrutiny to provide accurate information.

The companies telling better stories have an edge.

Those that master the power of words are the front-runners.

So do we really want to leave that in the “hands” of robots?

The future of content is AI-powered

Can ChatGPT write jokes or poems? Yes, and it does so with striking ease.

Beyond the fun, many have explored ways to integrate it into business, saving time when crafting a communication piece or generating blog post ideas (example 1). I’ve found a handful of posts about using ChatGPT in communications. One of the best examples comes from Shlomo Genchin, who showed how to use ChatGPT to produce advertising headlines.

When it comes to female health topics, the tool can describe certain conditions, create content drafts (example 2), and rephrase sentences to match your desired tone of voice. These are just a few applications that could fast-track your writing and content production, putting the user in the role of editor.

The limitations and dangers of ChatGPT for health-related content

Do we want fast and digestible information? Or do we want accurate information?

Health is one of those areas where word choice, clarity and meaning carry so much weight. We all want to consume accurate information reviewed by competent people. This matters all the more for FemTech/SexTech, a recent field whose mission is to bridge the knowledge and data gap. As polished and well-rounded as its answers sound, ChatGPT has its shortcomings.

What we know is that ChatGPT was trained on a vast volume of data from before the end of 2021 (2), which means it is unaware of the discoveries, content and cultural shifts of the past year. And staying on top of such shifts is critical, isn’t it?

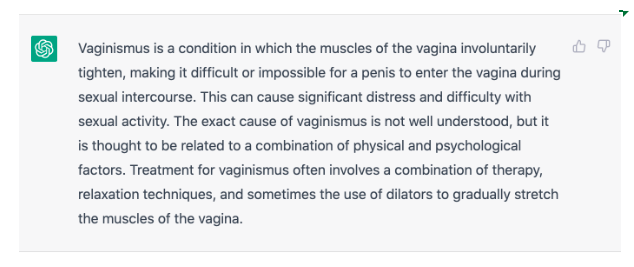

ChatGPT can’t ground any of its output in what is currently happening on the internet. What we say, and how we say it, constantly changes in response to the current context, much of which plays out on the web. Lacking internet antennae is therefore a serious limitation for a tool designed to boost communication abilities. A good example is how ChatGPT assumes penetrative sex involves a penis, whereas in the last few years we’ve become far more aware of the need for inclusive language around sexual practices (example 2).

The problem becomes even more critical when it comes to scientific sources. For example, when asked for the sources behind its explanation of vaginismus (example 3), it vaguely points to “general common knowledge” and “medical websites and books”, none of which we, on the receiving end, can actually review.

On another note, if you use ChatGPT to cover a sexual health topic and use words like “sexual” or “sex work” too often, you will quickly get a warning that your content may violate OpenAI’s content policy (example 4). I wasn’t blocked from using the platform. Still, I am stunned that such an advanced tool, capable of detecting the user’s intent, doesn’t understand that you’re producing sex education content… unless it deliberately has something against sex-positive content?

The age of AI: how do we keep it human?

No doubt many businesses, including FemTech and SexTech companies, will integrate more AI into their internal processes. But what will differentiate company A from company B if content production becomes available to anyone? How will companies stand out in an ocean of bland, generic content?

This is where these elements come in: the brand voice, the personality, the energy that puts a whole brand in motion. All human attributes.

In all the ChatGPT frenzy, one thing stays crystal clear: AI can facilitate many things, but it cannot create and maintain the spark that links brands to consumers, and it cannot tell authentic stories drawn from lived experience.

We are increasingly overwhelmed by the volume of available content. At the same time, as consumers, we are sharpening our ability to select, discard and cut through the noise.

The coming months will reveal clear front-runners: the brands that understand and refine their presence, carefully craft their touch points with community and consumers, and embody their brand in thoughtful ways.

AI is here to stay. But the human touch is what wins over and over again. How the two can work together, well, that’s what I look forward to seeing.

Example 1

Example 2

Example 3

Example 4

Sources